Image search sorts by content


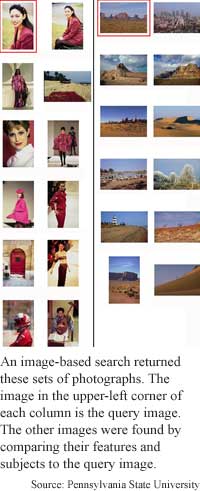

If you’ve ever tried to search for a picture on the web, you know it’s a hit-and-miss affair. You type in some words, but only retrieve those images that contain the search terms in their meta tags or file names.

Some digital image libraries, on the other hand, let users search on the content of the images themselves. One problem with these libraries is keeping searches manageable across thousands of images.

A group of researchers at Pennsylvania State University has come up with software that maps images’ key features and assigns the images to several broad categories. The Semantics-sensitive Integrated Matching for Picture Libraries (SIMPLIcity) system retrieves images by matching the features and categories of a query image to those of images stored in the database.

The system could cut the time and expense involved in sifting through large databases of images in biological research, said James Z. Wang, an assistant professor of Information Sciences and Technology and Computer Science and Engineering at Pennsylvania State University. It could also be used to index and recover images in vast museum and newspaper archives, he said.

To use the program, a user gives it a query image or image URL. The program indexes the image by converting it to a common format and extracting signature features, such as color, texture and shape of certain segments. These features are stored in a features database while the whole image is relegated to a system database.
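The indexing step can be illustrated with a minimal Python sketch. It is not the SIMPLIcity code: it assumes an image is already a grid of RGB tuples, uses the mean color of fixed-size tiles as a stand-in for the color, texture and shape features the article mentions, and keeps both databases as plain dictionaries. The names `block_features`, `index_image`, `features_db` and `image_db` are hypothetical.

```python
from statistics import mean

# Hypothetical in-memory stand-ins for the two databases described above.
features_db = {}  # image id -> compact feature signature
image_db = {}     # image id -> full pixel data


def block_features(pixels, block=2):
    """Return one (mean R, mean G, mean B) tuple per block x block tile.

    Assumption: mean tile color is used here only as a simple example of a
    signature feature; a real system would extract richer descriptors.
    """
    height, width = len(pixels), len(pixels[0])
    features = []
    for top in range(0, height, block):
        for left in range(0, width, block):
            tile = [pixels[y][x]
                    for y in range(top, min(top + block, height))
                    for x in range(left, min(left + block, width))]
            features.append(tuple(mean(p[c] for p in tile) for c in range(3)))
    return features


def index_image(image_id, pixels):
    """Store the whole image and its feature signature separately."""
    image_db[image_id] = pixels
    features_db[image_id] = block_features(pixels)
```

Keeping the signatures apart from the pixel data means a search only has to touch the small feature records, which is one plausible reason for the two-database split.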

Images are also classified semantically, as graph or photograph, textured or non-textured, indoor or outdoor, and objectionable or benign, he said.

“We first extract features. Then we use semantics classification to classify images into categories. Then within each category, we retrieve images based on their features,” Wang said.
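The classify-then-retrieve order Wang describes can be sketched as follows. This is an illustration under stated assumptions, not the researchers’ method: each database entry is assumed to carry a precomputed category label and a flat feature vector, similarity is plain Euclidean distance, and the function name `retrieve` and its parameters are hypothetical.

```python
import math


def retrieve(query_category, query_features, database, top_k=3):
    """Return ids of the top_k closest images within the query's category.

    database maps image id -> (category, feature_vector).
    Assumption: Euclidean distance stands in for the system's real measure.
    """
    candidates = [
        (math.dist(query_features, feats), image_id)
        for image_id, (category, feats) in database.items()
        if category == query_category  # search only within the same class
    ]
    return [image_id for _, image_id in sorted(candidates)[:top_k]]
```

Filtering by category first shrinks the set of images whose features have to be compared, which is where the approach would save time on large collections.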

After feature extraction and classification, the program “can tell that a picture is of a certain semantic class, such as photograph, clip art, indoor, [or] outdoor,” he said. Although the program still cannot tell that a picture shows a horse, when given a picture of a horse it can find other images with a similar appearance, he said.

It does this by matching the most similar areas and features of the images first and then comparing the remaining areas of the query image. In this way, all the areas of a query image are considered and the similarity of the query image to the database images is based on the entire image.

“An image with 3 objects is like a set of 3 points, each with a significance, in the feature space. Now the question is how to match two sets of points,” said Wang. A region-matching algorithm uses assigned significance of features in each region to do this, he said. The program’s matches remain consistent even when query images are rescaled or rotated, he said.
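One way to match two sets of weighted points is a greedy, most-similar-first scheme, sketched below in Python. It follows the description above only loosely and should not be read as the published algorithm: it assumes each region is a feature vector plus a significance, that significances in each image sum to 1, and that Euclidean distance measures how different two regions are. The function name `region_match_distance` is hypothetical.

```python
import math


def region_match_distance(regions_a, regions_b):
    """Distance between two images given as lists of (features, significance).

    Assumption: significances in each list sum to 1. The closest region pairs
    are matched first; each match uses up as much significance as both
    regions still have, so every region ends up contributing to the score.
    """
    remaining_a = [sig for _, sig in regions_a]
    remaining_b = [sig for _, sig in regions_b]
    # All region pairs, ordered from most similar to least similar.
    pairs = sorted(
        (math.dist(fa, fb), i, j)
        for i, (fa, _) in enumerate(regions_a)
        for j, (fb, _) in enumerate(regions_b)
    )
    total = 0.0
    for dist, i, j in pairs:
        credit = min(remaining_a[i], remaining_b[j])
        if credit > 0:
            total += credit * dist
            remaining_a[i] -= credit
            remaining_b[j] -= credit
    return total
```

For example, two images with identical regions score 0.0, while an image whose second region has no close counterpart in the other image is penalized in proportion to that region’s significance.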

It takes a couple of seconds to sort a database of 200,000 images by similarity to the query, Wang said. “Based on the interface, the system can provide a collection of best matches,” he said. According to Wang, the image retrieval system is more accurate and substantially faster than others available today.

The image retrieval system is one of several to have emerged in recent years, said Tomaso Poggio, a professor of Brain Sciences at the Massachusetts Institute of Technology. Although the system may be an improvement over previous systems, “I do not see any major breakthrough,” he said. “The intermediate semantic level makes sense but the list of semantic categories is arbitrary [from] what I can judge. It may be interesting to try to ground it in … studies of human subjects… to evaluate whether people do the task based on semantic level categories,” he added.

The program is currently used in several universities, Wang said. “Most of them are using SIMPLIcity to search for stock photos, pathology images, and video frames,” he said. The system could be in wide use within 10 years, he said. It is not likely to ever replace human skills completely, especially with much bigger image collections, he said. “I would not know how long it will take to develop a system suitable to search hundreds of millions of images” such as on the Internet, he said.

The researchers are continuing their search for more efficient image retrieval techniques, said Wang. “At the same time, we plan to apply our systems to domains such as biology and medicine.”

Wang’s research colleagues were Jia Li at Penn State University and Gio Wiederhold at Stanford University. They published the research in the September 2001 issue of IEEE Transactions on Pattern Analysis and Machine Intelligence. The research was funded primarily by the National Science Foundation (NSF).

Timeline: <10 years

Funding: Government

TRN Categories: Computer Vision and Image Processing; Databases and Information Retrieval; Pattern Recognition

Story Type: News

Related Elements: Technical paper, “SIMPLIcity: Semantics-Sensitive Integrated Matching for Picture Libraries,” in IEEE Transactions on Pattern Analysis and Machine Intelligence, September 2001; “Scalable Integrated Region-based Image Retrieval using IRM and Statistical Clustering,” presented at the Joint Conference on Digital Libraries (JCDL ’01), held in Roanoke, VA, June 24-28, 2001.


© Copyright Technology Research News, LLC 2000-2003. All rights reserved.